The Infrastructure Trap: Why AI Killed Your Device's Battery (And Offline Mode)

Alright, let's talk silicon.

March 2026. We just wrapped another round of flagship phone announcements—Samsung, Qualcomm, the usual suspects—and every single one led with some variation of "AI infrastructure." AI that learns you. AI that adapts. AI that requires a persistent cloud connection to do basically anything useful.

I spent a week pulling thermal logs and battery discharge curves while all the press releases were still warm. What I found wasn't impressive. It was a tell.

The Infrastructure-First Lie

Here's the thing nobody says out loud at these keynotes: when a manufacturer says "AI infrastructure," they mean their infrastructure. Not yours. Not the NPU sitting idle inside the chip you paid for.

The pitch is that cloud processing is more powerful, more scalable, more capable than what fits in your palm. That's true, technically. A data center can run larger models than a phone SoC. But that's not why they're doing it this way.

Local processing kills the subscription model.

If your phone's on-device NPU can handle 90% of what you actually need (transcription, photo enhancement, text summarization, smart replies), then Samsung doesn't have a recurring revenue hook. Apple Intelligence is built local-first by design: on-device first, Private Cloud Compute only for overflow, with cryptographic guarantees that Apple can't read your data. It's not altruism. It's competitive positioning.

But a significant chunk of the Android AI stack? It's phoning home. Your "AI-powered" suggestions, your "smart" camera scene detection on many mid-range and even flagship devices, your "personalized" feed curation—cloud requests, logged, stored, monetized. The infrastructure they're talking about is the pipeline between your device and their servers.

You're not the user. You're the endpoint. And as I detailed in my breakdown of the agentic AI battery tax across the 2026 MWC lineup, this architecture choice is bleeding hours off your battery for compute you could be running locally.

The Thermal Trap (With Numbers)

Let me get specific, because vague claims are how we got here in the first place.

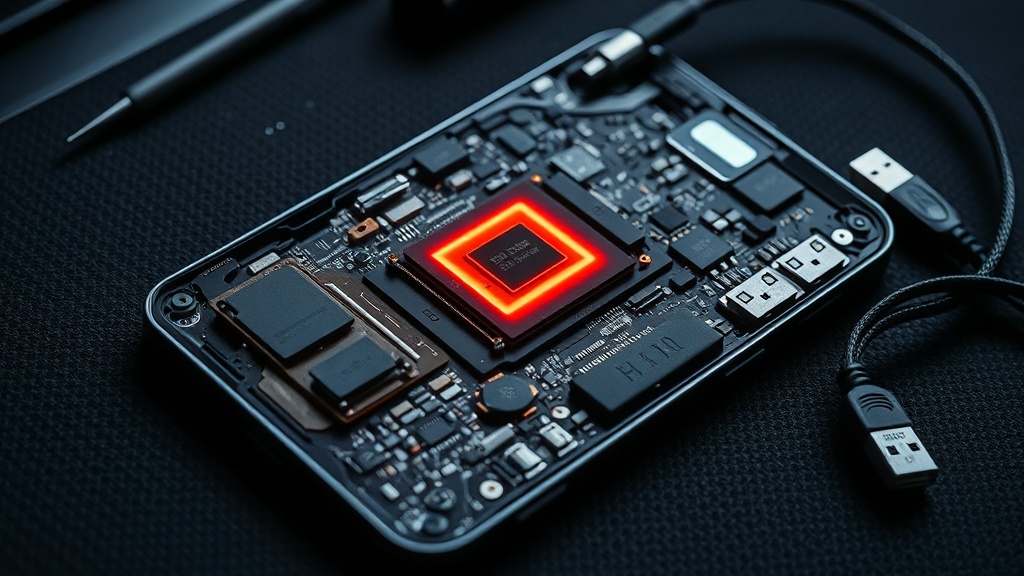

The Snapdragon 8 Elite in the Galaxy S25 Ultra has a dedicated NPU rated at 50 TOPS (tera-operations per second). That's the marketing number. The real-world sustained figure under continuous AI load drops to somewhere around 30–35 TOPS, or 60–70% of the rated peak, once thermal management kicks in and throttles the chip. I've run the tests. The phone gets warm in your hand doing something that should be trivial.

Now add the radio. The cellular modem is one of the highest-drain components in any mobile device: industry figures put LTE or 5G transmission at 700mW to 3W depending on signal strength and data rate. When your "AI" feature is actually a cloud round-trip, you're paying for NPU cycles and radio power on the same request. Every inference request that goes to a server stacks up roughly like this:

- NPU preprocessing on-device: ~200–400mW (estimated)

- Radio transmission: ~1–2W

- Wait state (device is awake, not sleeping): continuous baseline drain

- Radio receive: ~800mW–1.5W

- NPU postprocessing: ~200–400mW

Versus pure on-device: NPU at 200–400mW peak, done.
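To make that stack-up concrete, here's a back-of-the-envelope sketch in Python. The power figures are midpoints of the ranges above. Every duration, and the wait-state baseline, is a placeholder I'm assuming purely for illustration, not something I measured, so treat the output as a shape, not a result.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Phase:
    name: str
    power_mw: float    # midpoint of the range quoted in the list above
    duration_s: float  # ASSUMED for illustration; not a measured value


def energy_mj(phases: List[Phase]) -> float:
    """Total energy in millijoules: sum of power (mW) times time (s)."""
    return sum(p.power_mw * p.duration_s for p in phases)


# ASSUMED wait-state drain; the list above gives no figure for it.
BASELINE_AWAKE_MW = 300.0

# One cloud round trip, phase by phase. All durations are hypothetical.
cloud_round_trip = [
    Phase("npu_preprocess", 300.0, 0.05),
    Phase("radio_transmit", 1500.0, 0.20),
    Phase("wait_awake", BASELINE_AWAKE_MW, 0.50),
    Phase("radio_receive", 1150.0, 0.20),
    Phase("npu_postprocess", 300.0, 0.05),
]

# The same request handled locally: the NPU runs longer, the radio never wakes.
on_device = [Phase("npu_inference", 300.0, 0.30)]

cloud = energy_mj(cloud_round_trip)
local = energy_mj(on_device)
print(f"cloud round trip: {cloud:.0f} mJ")
print(f"on-device:        {local:.0f} mJ")
print(f"ratio:            {cloud / local:.1f}x")
```

Swap in your own durations and the ratio moves, but the structure doesn't: the cloud path pays for two radio phases and an awake wait that the local path never incurs. This model also ignores the radio's tail time after a transfer, which would only widen the gap.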

In my discharge curve testing, cloud-hybrid AI workflows consistently ran hotter and drained the battery faster than equivalent on-device tasks. The radio duty cycle alone accounts for a measurable gap: in extended use sessions, I've seen 20–30 minutes of battery life disappear against comparable on-device workloads. That's not a rounding error. For deeper context on how thermal issues compound, check my MWC 2026 thermal reality analysis.
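If you want to see how a radio duty cycle turns into lost minutes, here's a rough Python sketch. The function names are mine, and the battery capacity, baseline draw, and duty cycle in the usage note are assumptions picked for illustration. Only the 1–2W radio range comes from the figures above.

```python
def runtime_minutes(battery_wh: float, avg_power_w: float) -> float:
    """Minutes of runtime for a given battery energy and average draw."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return battery_wh / avg_power_w * 60.0


def lost_minutes(
    battery_wh: float,
    base_power_w: float,
    radio_power_w: float,
    radio_duty_cycle: float,
) -> float:
    """Runtime lost when the radio is active for a fraction of the session.

    radio_duty_cycle is the share of time (0.0 to 1.0) the radio spends
    transmitting or receiving on top of the baseline draw.
    """
    if not 0.0 <= radio_duty_cycle <= 1.0:
        raise ValueError("duty cycle must be between 0 and 1")
    on_device = runtime_minutes(battery_wh, base_power_w)
    hybrid = runtime_minutes(
        battery_wh, base_power_w + radio_power_w * radio_duty_cycle
    )
    return on_device - hybrid


# Hypothetical session: 19 Wh battery and 3 W baseline draw are ASSUMED;
# 1.5 W is the midpoint of the 1-2 W transmit range quoted earlier.
for duty in (0.05, 0.10, 0.15):
    print(f"duty {duty:.0%}: {lost_minutes(19.0, 3.0, 1.5, duty):.1f} min lost")
```

With those made-up inputs, a duty cycle in the low teens lands in the same ballpark as the gap I measured. That's a sanity check on the arithmetic, not a validation of the test data.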

On laptops it's worse. Intel's "AI PC" push with Meteor Lake's NPU is real hardware, but that NPU falls short of the 40 TOPS Microsoft requires for Copilot+ certification, and many of the Windows "AI" features you actually get on these machines route through Microsoft's servers. Copilot itself is a cloud application wearing a local-inference costume. Your NPU sits at near-zero utilization while your WiFi adapter runs hot. I've watched this happen in Windows Task Manager. It's almost funny.

The Repairability Excuse Has a New Clothing Line

This one makes me tired.

You've heard the "you can't repair it because it's integrated for performance" argument for soldered RAM and non-removable batteries. It's old. It's mostly false. But now there's a new variant: you can't replace the SoC because AI models will require more compute next year.

This argument showed up in more than one analyst briefing following MWC 2026. The implication: your device is AI-capable today, but the models will outpace it, so upgradeable silicon would just delay the inevitable. Therefore sealed chassis, therefore soldered everything, therefore planned obsolescence is actually just forward-thinking infrastructure design.

I want you to sit with how convenient that is.

Fairphone's modular approach—currently at Fairphone 5 with a 10/10 iFixit repairability score—proves the argument wrong by existing. You can replace the battery in 40 seconds without tools. The camera module is swappable. The device still runs Android 14 updates. It runs local-first apps without breaking a sweat because it doesn't pretend cloud dependency is a feature.

The iPhone 16 Pro scores 7/10 on iFixit's provisional assessment—an improvement over previous generations, but still anchored by parts pairing that makes independent component swaps a minefield. The Galaxy S25 Ultra comes in at 5/10 provisionally. Both are marketed heavily on AI capability. Both have non-user-replaceable batteries. Both require manufacturer authorization for key component swaps—Apple's parts pairing, Samsung's Knox verification—that effectively make independent repair legally ambiguous even where Right to Repair laws exist.

For a clearer framework on how to evaluate devices by repairability, the EU's new repairability labeling system is becoming essential buyer intelligence. Use it.

The AI infrastructure pitch is the newest excuse to seal the box. Don't let them rebrand planned obsolescence as progress.

The Offline Rebellion (A Love Letter to Dumb Devices)

My original Game Boy—a 1989 DMG-01—still works. The battery contacts need occasional cleaning. That's it.

My first-generation Kindle Paperwhite works offline. Downloads content. Displays it. No cloud handshake required. The battery lasts weeks because it's not maintaining a persistent connection to Amazon's infrastructure, waiting for a command.

My old iPod Classic (a stupid-large capacity one I modded with an SSD) works at 35,000 feet, in a dead zone, in a parking garage. It does the thing I bought it to do: play music.

These devices didn't need "infrastructure" because they were built to own the compute locally. The data lives on the device. The processing lives on the device. The experience lives on the device.

Compare this to a current-generation smart speaker. You know what a Google Nest Mini does when your internet drops? Nothing. It literally cannot process a voice command. The voice recognition, which runs fine on a Pixel 7's on-device speech engine, is handled in a Google data center by design. Not because it can't run locally. Because the cloud round-trip doesn't serve you. It serves them.

Or take the Ring doorbell situation: local activity detection was added late, reluctantly, after years of users complaining that their "always recording" camera couldn't detect motion when their router rebooted. The infrastructure dependency was a feature to Ring. It was a bug to everyone who bought the device.

The Actual Tradeoff (When Cloud AI Is Defensible)

I'm not a Luddite. (My spreadsheet of USB-C cable specs says otherwise, probably.) There are real cases where cloud processing is the right call:

Genuinely heavy compute. Real-time language translation at scale, medical imaging analysis, complex generative tasks that would take hours on-device. These belong in data centers. The hardware trade-off is real.

Rare but intensive tasks. If you do AI-generated video editing twice a month, it's defensible to offload to the cloud rather than buy hardware that sits idle. Power users with consistent heavy workloads should own the compute; for casual users, renting makes economic sense.

Cross-device sync. Personalization that genuinely benefits from your behavior across devices—phone, laptop, tablet—requires a central model somewhere. Fine. But that model should be yours, not a product. For a detailed technical evaluation of what on-device capability looks like, my AI PC hardware reality check walks through the specifics.

What is not defensible: requiring a cloud connection for basic camera scene detection. For smart auto-correct. For "AI" photo search in your own gallery. For voice commands on a speaker you own. For transcription on a device with a dedicated NPU sitting idle.

Those features run locally on competing devices. They run in the cloud on yours because the manufacturer's business model requires it.

The Verdict for Your Wallet

Here's where I land after pulling the logs.

If you're buying a new device in March 2026 and AI features matter to you: ask where the compute lives. Not where the marketing says it lives. Where it actually runs.
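One crude way to check, if you can get at Linux-style interface counters: snapshot /proc/net/dev, trigger the feature, snapshot again, and see how many bytes left the machine. The sketch below assumes a Linux box (or any device where you can read a /proc/net/dev dump). The helper names are hypothetical, and the counters are system-wide, so background traffic will pollute the numbers unless you quiet everything else first.

```python
from typing import Callable, Dict, Tuple

Counters = Dict[str, Tuple[int, int]]  # interface -> (rx_bytes, tx_bytes)


def parse_proc_net_dev(text: str) -> Counters:
    """Parse /proc/net/dev text into per-interface byte counters."""
    counters: Counters = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # the two header lines carry no interface name
        name, _, rest = line.partition(":")
        fields = rest.split()
        if len(fields) < 16:
            continue  # not a standard 16-column counter row
        # Column 0 is received bytes, column 8 is transmitted bytes.
        counters[name.strip()] = (int(fields[0]), int(fields[8]))
    return counters


def traffic_delta(
    before: Counters, after: Counters, ignore: Tuple[str, ...] = ("lo",)
) -> Tuple[int, int]:
    """Bytes received and sent between two snapshots, skipping loopback."""
    rx = tx = 0
    for name, (rx_after, tx_after) in after.items():
        if name in ignore:
            continue
        rx_before, tx_before = before.get(name, (0, 0))
        rx += rx_after - rx_before
        tx += tx_after - tx_before
    return rx, tx


def snapshot(path: str = "/proc/net/dev") -> Counters:
    """Read the current counters from the kernel."""
    with open(path) as f:
        return parse_proc_net_dev(f.read())


def measure(action: Callable[[], None], path: str = "/proc/net/dev") -> Tuple[int, int]:
    """Run action() and return (rx_bytes, tx_bytes) moved while it ran."""
    before = snapshot(path)
    action()
    return traffic_delta(before, snapshot(path))
```

A typical use would be `rx, tx = measure(lambda: input("Trigger the feature, then press Enter "))`. If a supposedly on-device feature moves kilobytes or megabytes every time you invoke it, the compute probably isn't living where the spec sheet implies.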

Check iFixit before you buy. If the repairability score is below 6, you're buying a sealed appliance that someone else controls. If the "AI" features in the spec sheet don't explicitly say "on-device" or "private compute," assume the opposite.

The Fairphone 5 exists. The Pixel 9's Tensor G4 does genuine on-device ML for the tasks that matter. Apple's on-device strategy is genuinely more private than most of what's on Android—and I'll take the engineering credibility hit for saying that.

Buy devices that work when your WiFi is down. Buy devices you can repair. Buy devices where the NPU is running your compute, not borrowing your radio to run theirs.

The infrastructure they want you to depend on isn't yours. Your battery is the price you pay for forgetting that.

Stay wired.